Ever seen a whale pretending to be a grass field? Or a sheep swimming in the ocean?

Of course not.

Some things just don’t fit.

But the software world is different. Here a four-eyed sheep can fly through space and no one will care. Until the moment it hits the ground.

“Oh my – this guy is talking about sheep and whales again…”

Relax. No whales this time. Instead, let me show you two architecture failure modes —

and one solution they both quietly ignore.

For our examples we will use Modbus, an ancient way of exchanging data between machines, and one that still refuses to be replaced. Each device exposes a set of registers, typically read and written in a fixed, periodic loop.

Simple. Brutal. Effective.

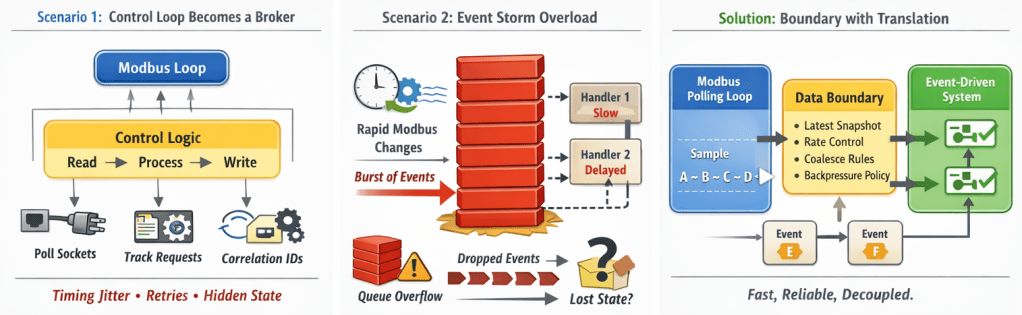

Scenario 1

We start clean. A Modbus system runs in a single deterministic loop:

read state -> process -> write state -> repeat
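To make that loop concrete, here is a minimal sketch. It does not use a real Modbus library: `FakeDevice`, `process`, and `run_loop` are hypothetical names, and the register bank is just an in-memory dictionary standing in for a device.

```python
import time
from typing import Dict


class FakeDevice:
    """Hypothetical in-memory stand-in for a Modbus device's register bank."""

    def __init__(self) -> None:
        self.registers: Dict[int, int] = {0: 0, 1: 0}

    def read_registers(self) -> Dict[int, int]:
        # Return a copy so the loop works on a stable snapshot.
        return dict(self.registers)

    def write_registers(self, values: Dict[int, int]) -> None:
        self.registers.update(values)


def process(state: Dict[int, int]) -> Dict[int, int]:
    """Toy control rule (an assumption): register 1 is register 0 plus one."""
    return {1: state[0] + 1}


def run_loop(device: FakeDevice, cycles: int, period_s: float = 0.0) -> None:
    """read state -> process -> write state -> repeat, at a fixed period."""
    next_tick = time.monotonic()
    for _ in range(cycles):
        state = device.read_registers()
        outputs = process(state)
        device.write_registers(outputs)
        # Sleep until the next scheduled tick so the period does not drift.
        next_tick += period_s
        time.sleep(max(0.0, next_tick - time.monotonic()))
```

Notice what is missing: no queues, no retries, no callbacks. Every cycle sees the whole state and does the same amount of work.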

One day, a new requirement appears: the Modbus data must be sent elsewhere using a modern RPC protocol.

Without much thought, we start adding the communication logic to the main control loop. Suddenly alien constructs appear: retry counters, timestamps, acknowledge signals. Before we know it, we have built a full-fledged message broker inside our simple loop.

Complexity grows.

Scenario 2

Now the opposite.

We start with a clean, event-driven environment. Requests, responses, handlers, queues. Perfect.

We add Modbus handling. “Easy,” we think.

“We’ll poll registers and emit events on change.”

It works… until signals start changing faster than the event system can digest.

Events pile up, updates get dropped or reordered, and information is lost.

And the more we try to solve it, the more complex the system becomes.
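A toy simulation shows the effect. Everything here is an assumption made for illustration: the function name `poll_and_emit`, the bounded queue, and a consumer that handles one event every few ticks. A real event system will differ in the details, but the shape of the failure is the same.

```python
from collections import deque
from typing import Iterable, List, Optional, Tuple


def poll_and_emit(
    samples: Iterable[int], queue_capacity: int, drain_every: int
) -> Tuple[List[int], int]:
    """Emit an event on every change; the consumer drains one event
    every `drain_every` ticks. Returns (delivered values, dropped count)."""
    queue: deque = deque()
    delivered: List[int] = []
    dropped = 0
    last: Optional[int] = None
    for tick, value in enumerate(samples):
        if value != last:
            if len(queue) >= queue_capacity:
                dropped += 1  # queue is full: this update is lost
            else:
                queue.append(value)
            last = value
        # The slow consumer handles one event every `drain_every` ticks.
        if tick % drain_every == drain_every - 1 and queue:
            delivered.append(queue.popleft())
    return delivered, dropped


# A signal that changes on every tick, against a consumer five times slower.
delivered, dropped = poll_and_emit(range(10), queue_capacity=2, drain_every=5)
print(delivered, dropped)  # prints: [0, 1] 7
```

The register already holds 9, while the consumer is still digesting 1. Making the queue bigger only delays the moment it falls behind.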

What happened?

In both cases we made the same fundamental mistake: we tried to bend the problem to fit the architecture that was already in place. We ignored the quiet signal saying:

“This does not belong here.”

There’s a simple rule—very much in the spirit of model-driven design:

Software should model the domain and its execution semantics.

For each domain, we must choose abstractions that fit naturally—without distortion.

The solution: a boundary with translation

The solution isn’t a smarter loop or a better event system.

It’s a boundary.

Keep each concern in its native execution model—and translate only at the edge.

On one side, a deterministic polling loop:

- Read registers

- Process state

- Write registers

- Repeat at a fixed rate

On the other side, an event-driven system:

- Requests

- Handlers

- Queues

- Backpressure

The boundary translates stable state from the deterministic world into meaningful change for the event-driven world.

No retries in the loop.

No event queues pretending to be registers.

No execution model impersonating another.

Each side runs the way it was designed to run.
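One possible shape for such a boundary, as a sketch rather than a recipe: the loop publishes its latest snapshot and moves on, and the event side asks for changes whenever it is ready. The `Boundary` class, its method names, and the `deadband` threshold are all hypothetical, and the sketch assumes a single consumer on the event side.

```python
import threading
from typing import Dict, List, Optional, Tuple

Change = Tuple[int, Optional[int], int]  # (address, old value, new value)


class Boundary:
    """Keeps only the latest register snapshot; older ones are overwritten."""

    def __init__(self, deadband: int = 0) -> None:
        self._lock = threading.Lock()
        self._latest: Optional[Dict[int, int]] = None
        self._reported: Dict[int, int] = {}  # touched by the event side only
        self._deadband = deadband

    def publish(self, snapshot: Dict[int, int]) -> None:
        """Loop side: store the newest state. Never waits for consumers."""
        with self._lock:
            self._latest = dict(snapshot)

    def take_changes(self) -> List[Change]:
        """Event side: compare the latest state with what was last reported."""
        with self._lock:
            latest = self._latest
        if latest is None:
            return []
        changes: List[Change] = []
        for addr, value in sorted(latest.items()):
            old = self._reported.get(addr)
            # Report only moves larger than the deadband (or first sightings).
            if old is None or abs(value - old) > self._deadband:
                changes.append((addr, old, value))
                self._reported[addr] = value
        return changes


boundary = Boundary(deadband=1)
for value in (10, 11, 20):          # three fast loop cycles
    boundary.publish({0: value})
print(boundary.take_changes())      # prints: [(0, None, 20)]
```

Intermediate values are coalesced on purpose. For register state the newest value is usually the one that matters, so the slow side always sees current truth instead of a backlog. If every transition matters, this particular translation is the wrong one, but the boundary is still the place to decide that.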

Getting there isn’t a technical trick—it’s a change in how you think about the problem.

Not:

“What’s the fastest way to implement this feature?”

But:

“What is the domain—and how does it naturally execute?”

Follow that, and things fall into place.

Sheep stay on grass.

Whales stay in the ocean.

And systems quietly become what they’re supposed to be.
